Image sensors are the key component of any camera or vision system. This blog summarizes a tech brief on the concepts essential to sensor performance in imaging applications. For a comprehensive analysis of the parameters, you may read the full tech brief.

While there are many aspects to consider, here we outline 6 key parameters:

- Physical parameters

Resolution: The amount of information per frame (image) is the product of the horizontal pixel count x and the vertical pixel count y. While consumer cameras boast of resolution the way car manufacturers tout horsepower, in machine vision one needs just enough resolution to solve the problem, and no more. Excess resolution means more sensor, more bandwidth, and more cost than you need. Takeaway: Match sensor resolution to optical resolution relative to the object(s) you must image.

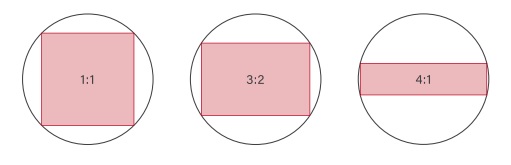
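As a rough illustration of "just enough resolution," the sketch below estimates the pixel count needed along each axis from the field of view and the smallest feature you must resolve. The function name, the example dimensions, and the 3-pixels-per-feature rule of thumb are assumptions for illustration, not values from the tech brief.

```python
import math

def required_pixels(fov_mm: float, smallest_feature_mm: float,
                    pixels_per_feature: float = 3.0) -> int:
    """Pixels needed along one axis so the smallest feature of interest
    spans `pixels_per_feature` pixels (assumed rule of thumb)."""
    return math.ceil(fov_mm / smallest_feature_mm * pixels_per_feature)

# Hypothetical application: 200 mm x 150 mm field of view, 0.5 mm features.
h_pixels = required_pixels(200.0, 0.5)
v_pixels = required_pixels(150.0, 0.5)
megapixels = h_pixels * v_pixels / 1e6

print(f"{h_pixels} x {v_pixels} pixels, about {megapixels:.2f} MP")
```

Under these assumptions a sensor of roughly 1200 x 900 pixels would suffice, so a much larger sensor would add bandwidth and cost without helping the application.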

Aspect ratio: Whether 1:1, 3:2, or some other ratio, the optimal arrangement should correspond to the layout of your target’s field of view, so as not to buy more resolution than is needed for your application.

Frame rate: If your target is moving quickly, you’ll need enough images per second to “freeze” the motion and to keep up with the physical space you are imaging. But as with resolution, one needs just enough speed to solve the problem, and no more, or you would over-specify and end up paying for a faster computer, cabling, etc.
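The speed tradeoff can be estimated with simple arithmetic. The following sketch computes the longest exposure that keeps motion blur under a chosen pixel budget, the minimum frame rate needed so that consecutive frames cover the moving scene without gaps, and the resulting raw data rate. All function names, the overlap fraction, and the example numbers are hypothetical.

```python
def max_exposure_s(object_speed_mm_s: float, mm_per_pixel: float,
                   max_blur_px: float = 1.0) -> float:
    """Longest exposure for which the object moves at most `max_blur_px` pixels."""
    return max_blur_px * mm_per_pixel / object_speed_mm_s

def min_frame_rate_hz(object_speed_mm_s: float, fov_along_motion_mm: float,
                      overlap_fraction: float = 0.1) -> float:
    """Frames per second needed so successive frames overlap by `overlap_fraction`."""
    advance_per_frame_mm = fov_along_motion_mm * (1.0 - overlap_fraction)
    return object_speed_mm_s / advance_per_frame_mm

def data_rate_mb_s(width_px: int, height_px: int, bits_per_pixel: int,
                   frames_per_s: float) -> float:
    """Raw (uncompressed) image data rate in megabytes per second."""
    return width_px * height_px * bits_per_pixel / 8 * frames_per_s / 1e6

# Hypothetical conveyor: 500 mm/s, 0.2 mm per pixel, 200 mm field of view.
exposure = max_exposure_s(500.0, 0.2)
fps = min_frame_rate_hz(500.0, 200.0)
rate = data_rate_mb_s(1200, 900, 8, 30.0)

print(f"max exposure {exposure * 1e6:.0f} us, min frame rate {fps:.2f} fps")
print(f"1200 x 900 at 8 bits and 30 fps is about {rate:.1f} MB/s")
```

Running the numbers this way shows which constraint actually drives the camera choice: here the exposure time is demanding, while the required frame rate is modest.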

Optical format: One could write a thesis on this topic, but the key takeaway is to match the lens’ projection of focused light onto the sensor’s array of pixels, to cover the sensor (and make use of its resolution). Sensor sizes and lens sizes often have legacy names left over from TV standards now decades old, so we’ll skip the details in this blog but invite the reader to read the linked tech brief or speak with a sales engineer, to ensure the best fit.

- Quantum Efficiency and Dynamic Range

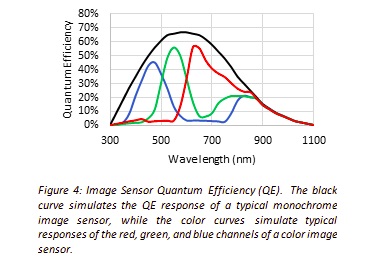

Quantum Efficiency (QE): Sensors vary in how efficiently they convert photons to electrons, both from one sensor to another and across wavelengths of light, so some sensors are better for certain applications than others.

Dynamic Range (DR): Factors such as Full Well Capacity and Read Noise determine DR, which is the ratio of the maximum signal a pixel can hold to the minimum signal it can distinguish from noise. The greater the DR, the better the sensor can capture the range of bright to dark gradations from the application scene.

- Optical parameters

While some seemingly-color applications can in fact be solved more easily and cost-effectively with monochrome, in either case each silicon-based pixel converts light (photons) into charge (electrons). Each pixel well has a maximum volume of charge it can handle before saturating. After each exposure, the degree of charge in a given pixel correlates to the amount of light that impinged on that pixel.
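The saturation limit described here is the Full Well Capacity mentioned under Dynamic Range. A common convention (assumed here, not quoted from the tech brief) expresses DR as full well capacity divided by read noise, reported as a ratio, in decibels, or in stops. The sensor values below are made up for illustration.

```python
import math

def dynamic_range(full_well_e: float, read_noise_e: float):
    """Return dynamic range as (ratio, decibels, stops).

    Assumes DR = full well capacity / read noise, both in electrons.
    """
    ratio = full_well_e / read_noise_e
    return ratio, 20.0 * math.log10(ratio), math.log2(ratio)

# Hypothetical sensor: 10,000 e- full well, 2.5 e- read noise.
ratio, db, stops = dynamic_range(10_000.0, 2.5)
print(f"DR = {ratio:.0f}:1, {db:.1f} dB, {stops:.1f} stops")
```

For these example values the result is 4000:1, or about 72 dB, which is just under 12 stops.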

- Rolling vs. Global shutter

Most current sensors support global shutter, where all pixel rows are exposed at once, eliminating the motion-induced distortion (skew) that row-by-row rolling shutter exposure can produce. But the on-sensor electronics to achieve global shutter have certain costs associated, so for some applications it can still make sense to use rolling shutter sensors.
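To judge whether a rolling shutter sensor is acceptable, it can help to estimate how far an object moves between the exposure of its first and last rows. This is a minimal sketch; the function name and the line time, row count, and speed used in the example are hypothetical and would come from the sensor datasheet and the application.

```python
def rolling_shutter_skew_px(object_speed_px_s: float, rows_spanned: int,
                            line_time_s: float) -> float:
    """Horizontal shift between the first and last row of a moving object.

    Each row starts exposing `line_time_s` after the previous one, so an
    object spanning `rows_spanned` rows is sampled over that many line times.
    """
    return object_speed_px_s * rows_spanned * line_time_s

# Hypothetical case: object 1000 rows tall, moving 2000 px/s, 10 us per row.
skew = rolling_shutter_skew_px(2000.0, 1000, 10e-6)
print(f"estimated skew: {skew:.1f} px")
```

If the estimated skew is well below a pixel, or the target is stationary during capture, rolling shutter may be perfectly adequate.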

- Pixel Size

Just as a wide-mouth bucket will catch more raindrops than a coffee cup, a larger physical pixel will admit more photons than a small one. Generally speaking, large pixels are preferred. But that requires the expense of more silicon to support the resolution for a desired x by y array. Sensor manufacturers work to optimize this tradeoff with each new generation of sensors.
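The bucket analogy can be put into numbers. The sketch below assumes the light reaching the sensor is uniform, that the whole pixel area is light-sensitive, and that photon shot noise dominates, in which case signal-to-noise ratio is the square root of the collected electrons. The flux and QE values are invented for illustration.

```python
import math

def collected_electrons(pixel_pitch_um: float, photons_per_um2: float,
                        qe: float) -> float:
    """Electrons generated in a square pixel during one exposure.

    Assumes 100% fill factor and a uniform photon density on the sensor.
    """
    return pixel_pitch_um ** 2 * photons_per_um2 * qe

def shot_noise_snr(electrons: float) -> float:
    """SNR when photon shot noise is the only noise source."""
    return math.sqrt(electrons)

# Same hypothetical illumination and QE, two pixel sizes.
small = collected_electrons(2.0, 100.0, 0.6)
large = collected_electrons(4.0, 100.0, 0.6)

print(f"2.0 um pixel: {small:.0f} e-, SNR {shot_noise_snr(small):.1f}")
print(f"4.0 um pixel: {large:.0f} e-, SNR {shot_noise_snr(large):.1f}")
```

Doubling the pixel pitch quadruples the collected signal and, under these assumptions, doubles the SNR, while also quadrupling the silicon area needed for the same x by y array.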

- Output modes

While each sensor typically has a “standard” intended output, at full resolution, many sensors offer additional switchable output modes like Region of Interest (ROI), binning, or decimation. Such modes typically read out a defined subset of the pixels, at a higher frame rate, which can allow the same sensor and camera to serve two or more purposes. An example of binning would be a microscopy application in which a binned image at high speed is used to locate a target blob in a large field, after which the camera switches to full resolution for a high-quality detail image.
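To make the three modes concrete, here is a small software illustration on a toy 4 x 4 frame. On a real camera these operations happen on the sensor or in the camera and are configured through the camera's own interface; the function names below are hypothetical, and summing (rather than averaging) the binned pixels is an assumption.

```python
def roi(image, top, left, height, width):
    """Region of Interest: keep only a rectangular window of the frame."""
    return [row[left:left + width] for row in image[top:top + height]]

def bin_2x2(image):
    """Binning: combine each non-overlapping 2x2 block into one summed value."""
    rows, cols = len(image), len(image[0])
    return [
        [image[r][c] + image[r][c + 1] + image[r + 1][c] + image[r + 1][c + 1]
         for c in range(0, cols - 1, 2)]
        for r in range(0, rows - 1, 2)
    ]

def decimate(image, step=2):
    """Decimation: keep every `step`-th pixel in each direction, discard the rest."""
    return [row[::step] for row in image[::step]]

frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]

print(roi(frame, 1, 1, 2, 2))  # [[6, 7], [10, 11]]
print(bin_2x2(frame))          # [[14, 22], [46, 54]]
print(decimate(frame))         # [[1, 3], [9, 11]]
```

All three modes return a quarter of the original pixels here, which is why they can raise the frame rate. Binning keeps the full field of view and combines signal from neighboring pixels, decimation keeps the field of view but discards signal, and ROI keeps full detail over a smaller area.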

For a more in-depth review of these concepts, including helpful images and diagrams, please download the tech brief.

1st Vision’s sales engineers have an average of 20 years of experience to assist in your camera selection. Representing the largest portfolio of industry-leading brands in imaging components, we can help you design the optimal vision solution for your application.