3D laser profiling is widely used across industries and applications. There are a number of mature offerings, along with periodic next-generation innovations. So what would it take to convince you to look at the value proposition of AT – Automation Technology’s C6 Series? In particular, consider the C6-3070, the fastest laser triangulation profiler on the market.

AT says that “C6 Series is an Evolution. C6-3070 is a Revolution”. Let’s briefly review the principles of laser profile scanning, followed by what makes this particular product so compelling.

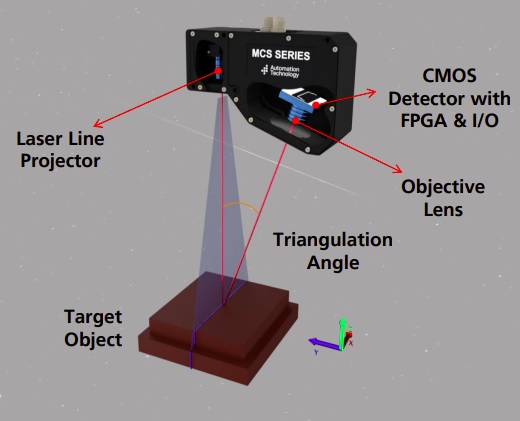

What are the distinguishing characteristics of each item labeled in the above diagram?

- Target object: An item whose height variations we want to digitally map or profile

- XYZ guide: The laser line paints the X dimension; each slice is in the Y dimension; height correlates to Z

- Laser line projector: paints the X dimension across the target object

- Objective lens: focuses reflected laser light

- CMOS detector: array of pixel wells, or pixels, such that for each cycle, the position at which the reflected laser line lands on the array corresponds to the height of the geometrically corresponding position on the target object

- FPGA and I/O circuitry: provide the timing, the smarts, and the communications

The key to laser triangulation is that the angle at which reflected laser light reaches the detector varies in direct correlation with the height variations on the target object, which reflects the projected laser light through the lens and onto the detector. It’s “just geometry”, though of course packaged efficiently into the embedded algorithms and precisely aligned optics.
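As a rough illustration of that geometry, the sketch below converts a shift of the laser line on the detector into a height change. It assumes a simplified textbook model: the laser is projected perpendicular to the surface, the camera views it at a fixed triangulation angle, and perspective and lens distortion are ignored. The function name and all parameter values are hypothetical and are not taken from AT's documentation.

```python
import math


def height_from_line_shift(shift_px, pixel_pitch_mm, magnification, angle_deg):
    """Estimate a height change (mm) from a laser-line shift on the sensor.

    Simplified model (an assumption, not AT's algorithm):
    height = shift_on_sensor / (magnification * sin(triangulation angle)).
    """
    shift_on_sensor_mm = shift_px * pixel_pitch_mm       # pixels -> mm on the sensor
    shift_in_scene_mm = shift_on_sensor_mm / magnification  # undo optical magnification
    return shift_in_scene_mm / math.sin(math.radians(angle_deg))


# Hypothetical numbers: 10 px shift, 5 um pixels, 0.5x optics, 30 degree angle.
dz = height_from_line_shift(
    shift_px=10, pixel_pitch_mm=0.005, magnification=0.5, angle_deg=30
)
print(f"height change: {dz:.3f} mm")
```

In this model a larger triangulation angle produces a larger line shift for the same height step, which is one reason the angle is a useful parameter to tune.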

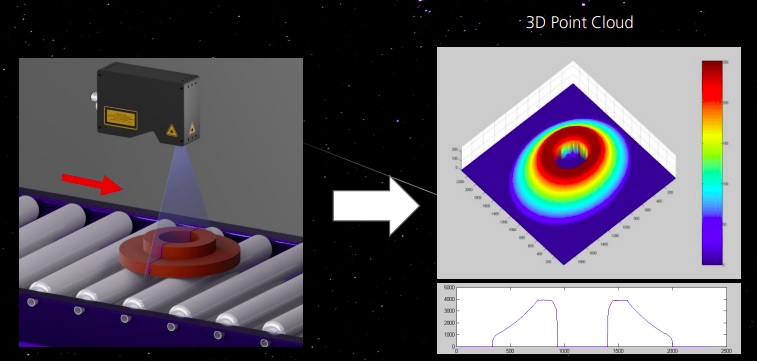

The goal in 3D profile scanning is to build a 3D point cloud representing the height profile of the target object.
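A minimal sketch of how such a point cloud might be assembled follows, assuming each scan cycle yields one profile (a list of heights across X) and the object advances a fixed distance in Y between profiles. The function name, the use of `None` for positions where no laser line was detected, and the step sizes are illustrative assumptions.

```python
def profiles_to_point_cloud(profiles, x_step_mm, y_step_mm):
    """Stack successive height profiles into a list of (x, y, z) points.

    profiles: list of profiles, one per scan cycle; each profile is a list
    of heights in mm, with None marking a position with no valid height.
    """
    points = []
    for row, profile in enumerate(profiles):      # each profile is one Y slice
        for col, z in enumerate(profile):         # each entry is one X position
            if z is None:                         # skip missing measurements
                continue
            points.append((col * x_step_mm, row * y_step_mm, z))
    return points


# Two hypothetical profiles of two points each; one measurement is missing.
cloud = profiles_to_point_cloud(
    [[0.0, 1.0], [None, 2.0]], x_step_mm=0.5, y_step_mm=0.2
)
print(cloud)
```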

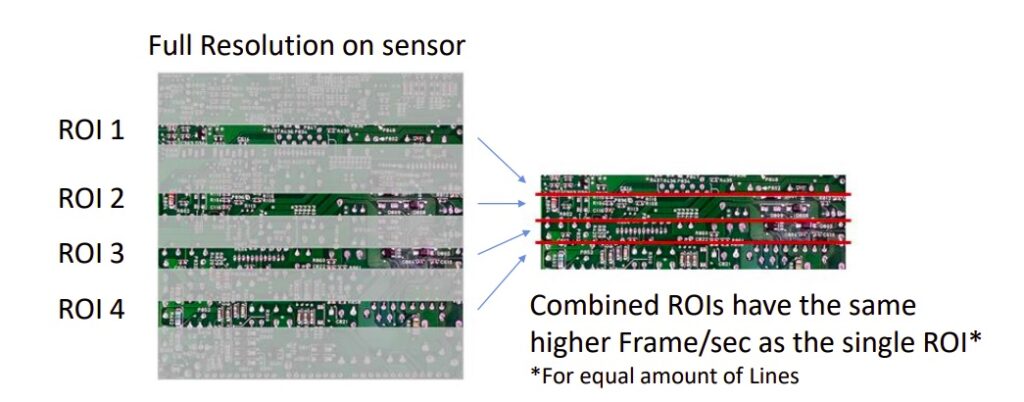

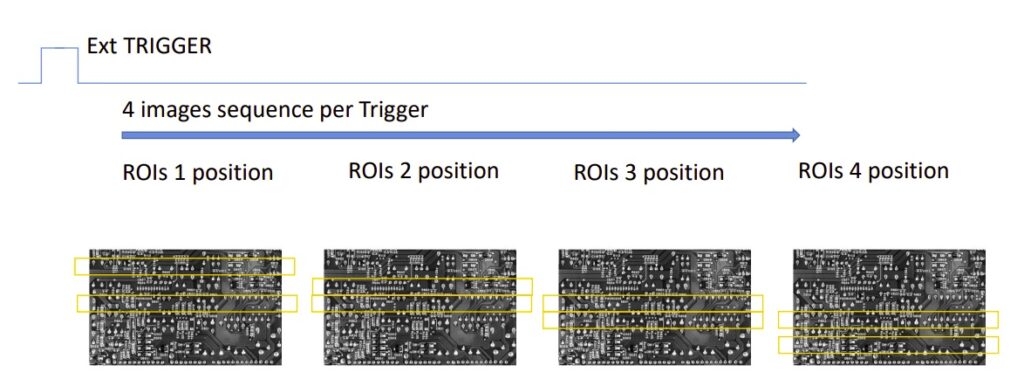

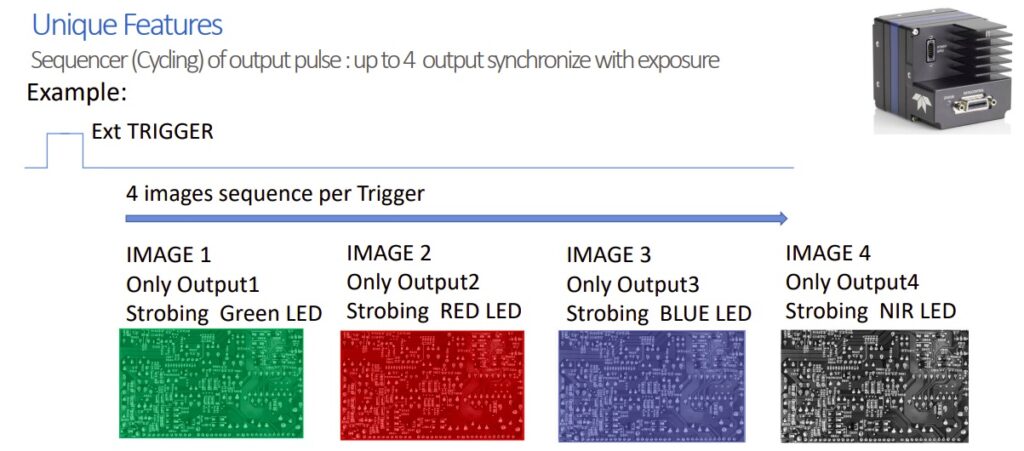

Speed and Resolution: 200 kHz at 3k resolution. That’s the fastest on the market, thanks to AT’s proprietary WARP (Widely Advanced Rapid Profiling) sensor. How does it work?

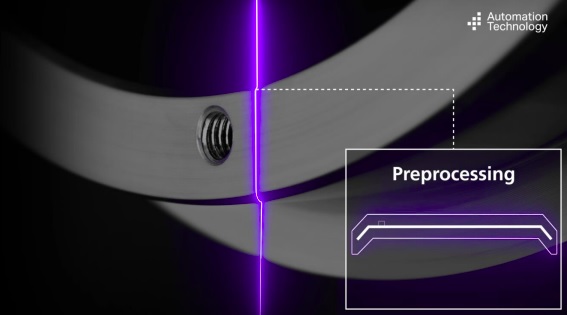

The C6-3070 imager has on-board pre-processing. In particular, it detects the laser line on the imager, so that only the part of the image around the laser line is transferred to the FPGA for further processing. This massively reduces the volume of data that needs to be transferred, by focusing on just the relevant immediate neighborhood around the laser line. That means more cycles per second, which is how 200 kHz at 3k resolution is attained.
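The idea of transferring only the neighborhood of the laser line can be sketched in a few lines. This is an illustration of the general principle, not AT's WARP implementation: it assumes a single band of rows around the brightest row is kept for the whole frame, and the helper names, frame size, and conveyor speed are hypothetical. The second helper shows what a given profile rate means for the spacing between profiles on a moving object.

```python
def crop_around_laser_line(frame, half_window):
    """Keep only the rows near the brightest row of the frame.

    frame: list of rows, each a list of pixel intensities.
    Returns (index_of_first_kept_row, kept_rows).
    """
    row_sums = [sum(row) for row in frame]
    peak_row = max(range(len(frame)), key=row_sums.__getitem__)
    first = max(0, peak_row - half_window)
    last = min(len(frame), peak_row + half_window + 1)
    return first, frame[first:last]


def profile_spacing_mm(speed_mm_per_s, profile_rate_hz):
    """Distance the object travels between two consecutive profiles."""
    return speed_mm_per_s / profile_rate_hz


# Hypothetical 12-row frame with the laser line on row 7.
frame = [[0] * 4 for _ in range(12)]
frame[7] = [255] * 4

first_row, cropped = crop_around_laser_line(frame, half_window=1)
reduction = len(frame) / len(cropped)   # rows read out vs. rows kept

# Hypothetical conveyor at 1000 mm/s scanned at 200 kHz.
spacing = profile_spacing_mm(1000.0, 200_000.0)
print(first_row, len(cropped), reduction, spacing)
```

A real laser line usually bends across the columns as the height changes, so a practical scheme would need to track the line per region rather than with one fixed band; the sketch only shows why less data per frame leaves room for more frames per second.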

Modularity: When Henry Ford introduced the Model T, he is famously said to have remarked, “You can have it any color you like, as long as it’s black.” Ford achieved economies of scale with a standardized product, and almost all manufacturers follow principles of standardization for the same reason.

But AT – Automation Technology’s C6 Series is modular by design – each component of an overall system offers standard options. There are no minimum order quantities, no special engineering charges, and lead times are short because the modular components are pre-stocked.

For example:

- Laser options (blue or red; laser class 2M, 3R, or 3B)

- X-FOV (Field Of View) from 7 mm to 1290 mm

- Single or dual head sensors

- Sensor parameters offer customizable Working Distance, Triangulation Angle, and Speed

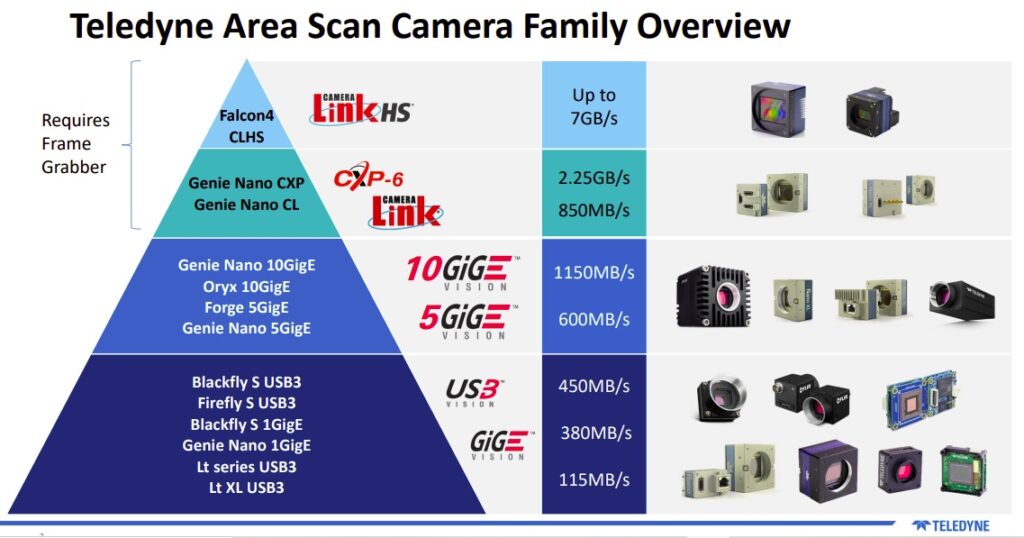

Software: The cameras may be controlled by many popular third-party software products, as they are GigE Vision / GenICam 3.0 compliant. Or you may download the comprehensive and free AT Solution Package, optimized for use with AT’s IR cameras. The SDK is a C-based API with wrappers for C++, C#, and Python.

Besides the SDK itself, users may want to take advantage of the Metrology Package. The Metrology Package provides a toolset for evaluating measurement results.

Pricing: You might think that a product asserted to be the fastest on the market would come at a premium price. In fact, AT’s 3D profilers are priced so competitively that they are often price leaders as well. At the time of writing, they certainly lead on price-to-performance in their class. Call us at 978-474-0044.

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera and components selection. With a large portfolio of lenses, cables, NICs, and industrial computers, we can provide a full vision solution!