Product innovation continues to serve machine vision customers well. Clever designs are built for evolving customer demands and new markets, supported by electronics miniaturization and speed. Long a market leader in line scan imaging, Teledyne DALSA now offers the Linea HS2 TDI line scan camera family.

Video overview

The video below is just over one minute in duration, and provides a nice overview:

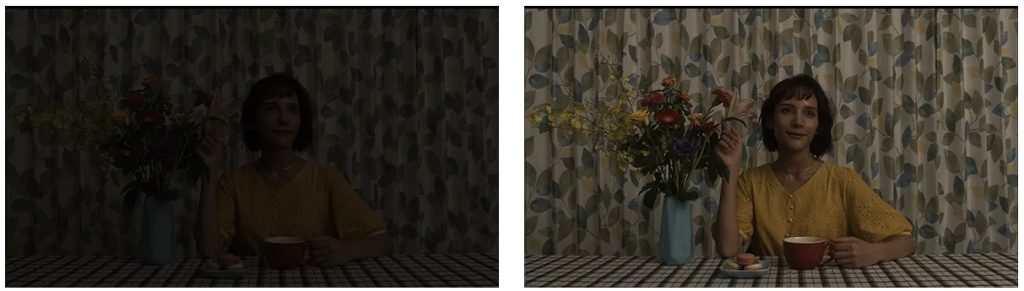

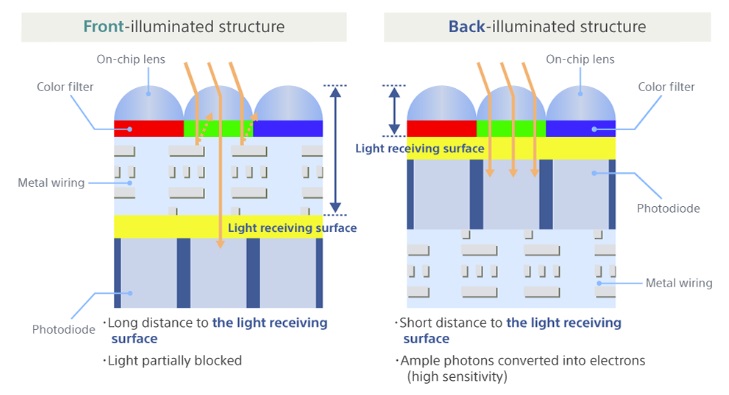

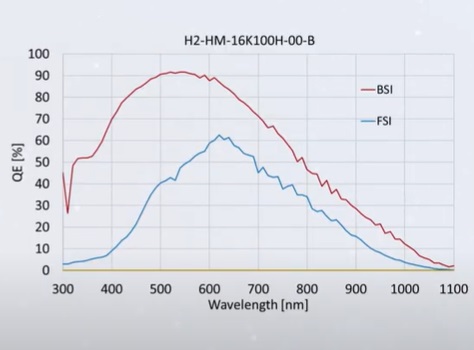

Backside illumination enhances quantum efficiency

Early sensors all used frontside illumination, and everybody lived with that until about 10 years ago, when backside illumination was developed and refined. The key insight was to let the photons hit the light-sensitive surface first, with the sensor’s wiring layer on the other side instead of in the light path. This greatly improves quantum efficiency, as seen in the graph below:

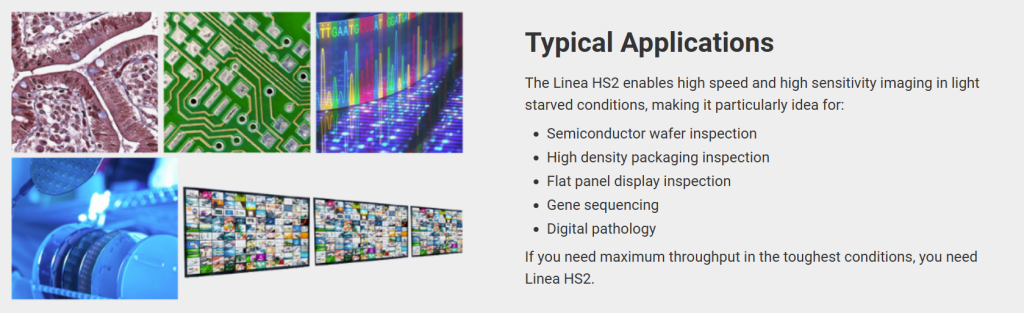

Applications

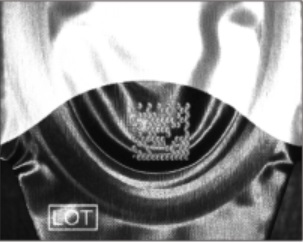

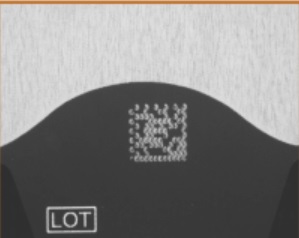

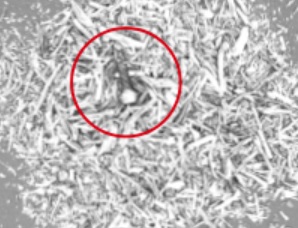

This camera series is designed for high-speed imaging in light-starved conditions. Applications include, but are not limited to, inspecting flat panel displays, semiconductor wafers, and high density interconnects, as well as diverse life science uses.

Line scan cameras

You may already be a user of line scan cameras. If you are new to that branch of machine vision, compare and contrast line scan vs. area scan imaging. If you want the concept in a phrase or two, think of a “slice”, or line of pixels, obtained as the continuous wide target passes beneath the camera. Repeat indefinitely. The resulting image can be used to monitor quality, detect defects, and/or tune controls.

Time Delay Integration (TDI)

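Perhaps you even use Time Delay Integration (TDI) technology already. TDI builds on top of “simple” line scan by capturing the same line of the target several times as it moves across successive rows of the sensor, then summing those exposures in step with the motion. The result is more signal per line without the motion blur that a single long exposure would cause, which is why TDI suits fast-moving targets in low light.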
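To make the idea concrete, here is a minimal sketch of why summing repeated exposures helps. It is not a model of the Linea HS2 sensor: the flat target, the signal-independent Gaussian noise, the line width, and the 64-stage count are all assumptions chosen for illustration, and the function names are hypothetical. Under those assumptions the signal grows with the number of stages while the noise grows only with its square root.

```python
import random
import statistics

def expose_line(true_signal, noise_sigma, rng, width):
    """One short exposure of one object line: flat signal plus Gaussian noise per pixel."""
    return [true_signal + rng.gauss(0.0, noise_sigma) for _ in range(width)]

def tdi_line(true_signal, noise_sigma, rng, width, stages):
    """Sum `stages` exposures of the same object line, one per sensor row it passes."""
    total = [0.0] * width
    for _ in range(stages):
        exposure = expose_line(true_signal, noise_sigma, rng, width)
        total = [t + e for t, e in zip(total, exposure)]
    return total

def snr(line):
    """Signal-to-noise estimate for a flat target: mean over standard deviation."""
    return statistics.mean(line) / statistics.stdev(line)

rng = random.Random(0)      # fixed seed so the sketch is repeatable
WIDTH, STAGES = 2000, 64    # hypothetical values, not camera specifications

single = expose_line(10.0, 5.0, rng, WIDTH)
summed = tdi_line(10.0, 5.0, rng, WIDTH, STAGES)
snr_gain = snr(summed) / snr(single)

print(f"single-line SNR: {snr(single):.1f}")
print(f"{STAGES}-stage TDI SNR: {snr(summed):.1f} (gain about {snr_gain:.1f}x)")
```

With 64 stages the expected gain is roughly the square root of 64, or about 8x. A real TDI sensor performs the summing on the chip, and its noise behavior is more complex than this simplified model.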

Maybe you already have one or more of Teledyne DALSA’s prior-generation Linea HS line scan cameras. They feature the same pixel size, optics, and cables as the new Linea HS2 series. With a 2.5x speed increase, the Linea HS2 provides a seamless upgrade. The Linea HS2 also offers an optional cooling accessory to enhance thermal stability.

Frame grabber

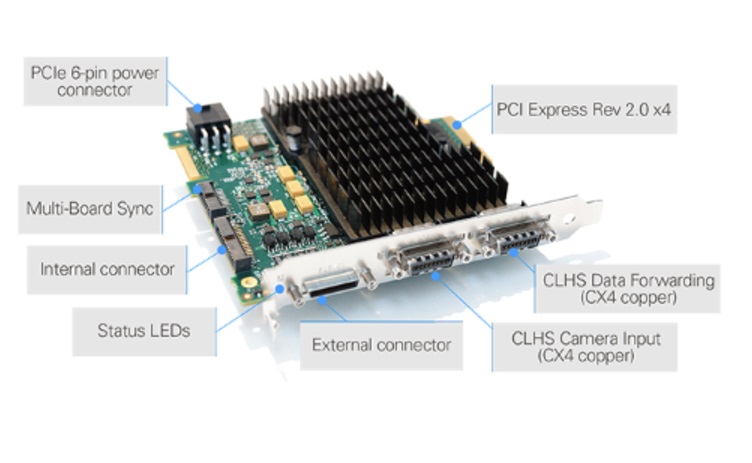

The Linea HS2 utilizes Camera Link High Speed (CLHS) to match the camera’s data output rate with an interface that can keep up. Teledyne DALSA manufactures not just the camera, but also the Xtium2-CL MX4 Camera Link Frame Grabber.

The Xtium2-CL MX4 is built on next generation CLHS technology and features:

- 16 Gigapixels per second

- dual CLHS CX4 connectors

- drives active optical cables

- supports parallel data processing in up to 12 PCs

- allows cable lengths over 100 meters with complete EMI immunity

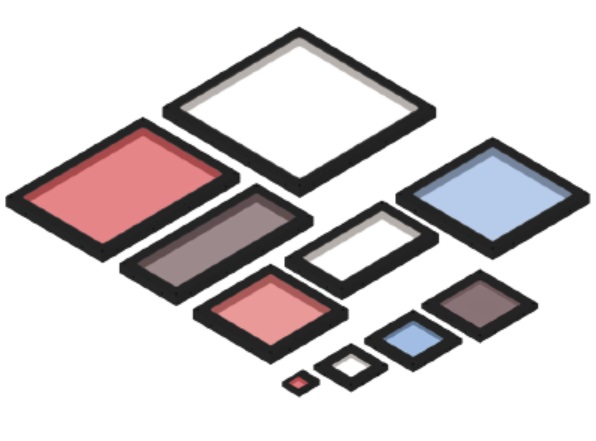

Which camera to choose?

As this blog is released, the Linea HS2 with 16k/5μm resolution provides an industry-leading maximum line rate of 1 MHz, or 16 Gigapixels per second of data throughput. Do you need the speed and sensitivity of this camera? Or is one of the “kid brother” models enough? They were already highly performant before the new kid came along. We can help you sort out the specifications according to your application requirements.
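The throughput figure follows directly from the resolution and line rate quoted above: pixels per line multiplied by lines per second. The short sketch below shows the arithmetic. The function name is hypothetical, and reading “16k” as either 16,000 or 16,384 pixels is an assumption, since the exact pixel count is not stated here.

```python
def pixel_throughput(pixels_per_line, line_rate_hz):
    """Pixels delivered per second: line width times lines per second."""
    return pixels_per_line * line_rate_hz

LINE_RATE_HZ = 1_000_000  # 1 MHz maximum line rate, as quoted above

# "16k" read as a round 16,000 pixels (assumption)
nominal = pixel_throughput(16_000, LINE_RATE_HZ)

# "16k" read as 16 * 1024 = 16,384 pixels (assumption)
binary = pixel_throughput(16 * 1024, LINE_RATE_HZ)

print(f"16,000 px x 1 MHz = {nominal / 1e9:.3f} Gigapixels per second")
print(f"16,384 px x 1 MHz = {binary / 1e9:.3f} Gigapixels per second")
```

Either reading lands at roughly 16 Gigapixels per second, which matches the frame grabber bandwidth listed earlier. The same function can be used to compare slower models by substituting their line width and line rate.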

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera and components selection. With a large portfolio of cameras, lenses, cables, NIC cards and industrial computers, we can provide a full vision solution!

About you: We want to hear from you! We’ve built our brand on our know-how and like to educate the marketplace on imaging technology topics. What would you like to hear about? Drop a line to info@1stvision.com with the topics you’d like to know more about.